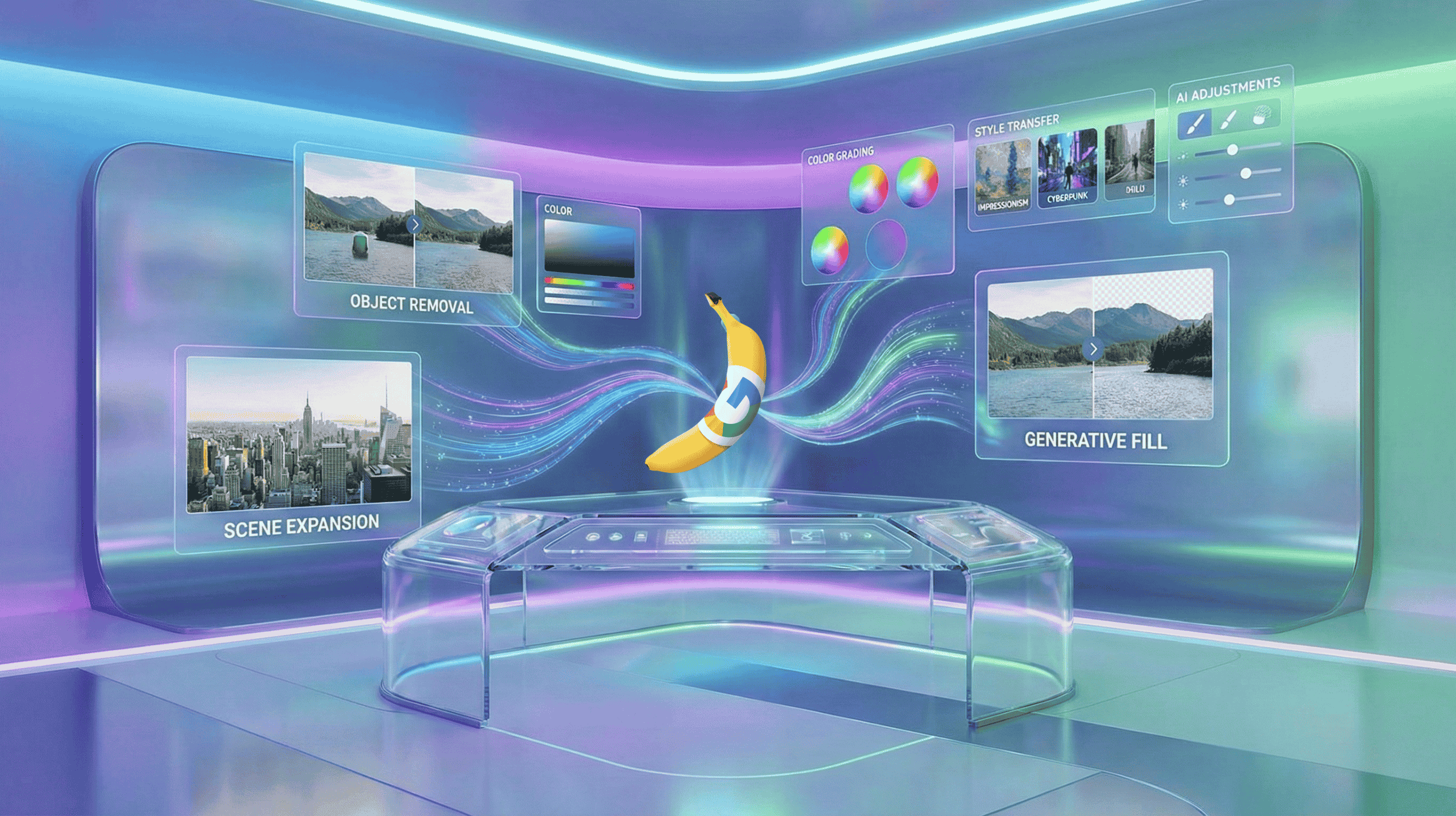

Google’s Nano Banana Pro transforms image editing into a fully immersive AI-powered creative control room.

Google’s Nano Banana Pro model turns AI image editing into something closer to a creative control room than a novelty filter. It matters now because it fuses high‑end visual quality with Gemini 3 Pro’s reasoning, which lets people and teams move from rough ideas to production‑ready visuals with far less manual work.

What Nano Banana Pro Actually Is

Nano Banana Pro is Google DeepMind’s new Gemini 3 Pro Image model, the successor to the original Nano Banana, which was built on Gemini 2.5 Flash Image. It is available through the Gemini API, Google AI Studio, and Vertex AI, which means the same model that powers playful edits in consumer apps can also sit behind enterprise workflows.

Under the hood, it pairs a high‑fidelity image generator with Gemini 3 Pro’s multimodal reasoning and real‑world knowledge, so the system can not only render scenes but also interpret prompts that include structure, data, and constraints. That is a shift from “draw what I say” toward “understand what I am trying to achieve, then design something that fits.”

From Fun Edits To Studio Controls

Earlier Nano Banana features focused on creative restyling, character consistency, and local edits on a canvas, which made it ideal for casual users and hobbyists. Nano Banana Pro keeps that playfulness but adds studio‑grade controls over lighting, camera angle, focus, color grading, and aspect ratio, with output at 2K and 4K resolution.

Instead of a one‑shot image, you can now select, refine, and transform specific regions, change day to night, add bokeh, or reframe a shot while preserving composition. In practice, this makes the model feel less like a magic button and more like a very fast, very patient assistant director of photography.
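One way to keep these controls organized is to hold them in a small structure and turn them into a plain-language edit instruction. The sketch below is only an illustration: `ShotSpec`, its field names, and the output wording are hypothetical and are not part of any Google API. It shows how lighting, camera, focus, and grading choices might be combined with a list of things the edit should preserve.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ShotSpec:
    """Hypothetical container for the controls named above; not an official schema."""

    lighting: str = ""
    camera_angle: str = ""
    focus: str = ""
    color_grade: str = ""
    keep: list[str] = field(default_factory=list)  # things the edit must preserve

    def to_prompt(self, base_instruction: str) -> str:
        """Join the non-empty controls into one plain-language edit instruction."""
        parts = [base_instruction.strip().rstrip(".")]
        if self.lighting:
            parts.append(f"Lighting: {self.lighting}")
        if self.camera_angle:
            parts.append(f"Camera angle: {self.camera_angle}")
        if self.focus:
            parts.append(f"Focus: {self.focus}")
        if self.color_grade:
            parts.append(f"Color grade: {self.color_grade}")
        if self.keep:
            parts.append("Preserve: " + ", ".join(self.keep))
        return ". ".join(parts) + "."


spec = ShotSpec(
    lighting="golden hour, low sun",
    focus="shallow depth of field with soft bokeh",
    keep=["composition", "subject identity"],
)
print(spec.to_prompt("Change the scene from day to dusk"))
```

Keeping the controls separate from the base instruction makes it easier to change one setting at a time between revisions, which is the kind of iterative refinement described above.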

Text On Images Finally Grows Up

One of the biggest leaps is text rendering. Nano Banana Pro is designed to produce legible, layout‑aware text directly inside images, from simple taglines to dense paragraphs on posters or infographics. Benchmarks and early reports point to lower text error rates across multiple languages and more consistent typography than earlier models delivered.

Because Gemini 3 Pro Image can reason about structure, it can place text in diagrams, charts, and multi‑panel layouts with attention to spacing and hierarchy, not just decoration. For designers and marketers, that unlocks mockups that are much closer to final production assets, instead of the usual “lorem ipsum” placeholders.

Consistency Across People, Products, And Panels

Nano Banana Pro lets users blend up to 14 images in a single composition while maintaining resemblance and identity for up to 5 people. That consistency matters when you need the same character, product, or logo to show up across multiple frames, storyboards, or campaign variations.
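Tools built around these limits may want to check a reference set before sending it anywhere. Here is a minimal sketch that uses the two figures quoted above (14 images, 5 people). The `Reference` type and its `role` labels are hypothetical, and the check assumes each image tagged as a person shows exactly one person.

```python
from dataclasses import dataclass

MAX_REFERENCE_IMAGES = 14  # limit quoted in the article
MAX_CONSISTENT_PEOPLE = 5  # limit quoted in the article


@dataclass(frozen=True)
class Reference:
    path: str
    role: str  # hypothetical label such as "person", "product", or "style"


def check_reference_set(refs):
    """Return a list of problems; an empty list means the set fits the stated limits."""
    problems = []
    if len(refs) > MAX_REFERENCE_IMAGES:
        problems.append(
            f"{len(refs)} reference images exceeds the limit of {MAX_REFERENCE_IMAGES}"
        )
    # Assumption: one person per image tagged with the "person" role.
    people = sum(1 for ref in refs if ref.role == "person")
    if people > MAX_CONSISTENT_PEOPLE:
        problems.append(
            f"{people} people exceeds the limit of {MAX_CONSISTENT_PEOPLE}"
        )
    return problems


cast = [Reference(f"actor_{i}.png", "person") for i in range(6)]
props = [Reference("bottle.png", "product"), Reference("logo.png", "product")]
print(check_reference_set(cast + props))
```

Returning a list of problems rather than raising on the first one lets an interface show every issue to the user in a single pass.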

This also applies to style and layout. You can take texture, color, or visual style from a reference photo and apply it across a series of images, which keeps branding coherent without redrawing everything from scratch.

In effect, Nano Banana Pro acts like a style engine that remembers what your world is supposed to look like.

Gemini 3 Pro Reasoning Behind The Scenes

What sets this model apart is how deeply it leans on Gemini 3 Pro’s reasoning and grounding features. Gemini 3 can call out to Google Search to fetch real‑time facts, then use that information to generate or edit images that stay aligned with current data.

This is especially important for infographics, dashboards, or educational visuals that need factual accuracy, not just aesthetics.

Gemini 3 Pro also introduces controls such as media resolution, which governs how much visual detail the model allocates to reading text or small objects in inputs, and “thought signatures” that preserve visual context over multi‑turn editing sessions. That means you can have a back‑and‑forth conversation like “make the background sunset, now move the camera higher, now add captions in Spanish” and the model keeps track of the evolving scene.
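The general pattern behind that kind of conversation can be sketched without any real model call. In the example below, `EditSession` and `fake_edit` are hypothetical stand-ins: the session simply stores every turn and hands the whole history to whatever function performs the edit. How thought signatures are actually carried between turns is not covered here, so they are not modeled.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EditSession:
    """Hypothetical multi-turn editing session that resends all prior turns."""

    edit_fn: Callable[[list], str]  # stand-in for a real model call
    history: list = field(default_factory=list)

    def send(self, instruction: str) -> str:
        # Record the new instruction, then let the editor see the full history.
        self.history.append({"role": "user", "text": instruction})
        result = self.edit_fn(self.history)
        self.history.append({"role": "model", "image": result})
        return result


def fake_edit(history: list) -> str:
    """Placeholder editor: names the output after the number of user turns so far."""
    user_turns = [turn for turn in history if turn["role"] == "user"]
    return f"image_v{len(user_turns)}"


session = EditSession(edit_fn=fake_edit)
for step in [
    "make the background sunset",
    "now move the camera higher",
    "now add captions in Spanish",
]:
    print(step, "->", session.send(step))
```

Because each call receives the earlier instructions and results, a follow-up such as "now move the camera higher" can be interpreted against the scene as it currently stands rather than the original image.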

Where You Will See It First

Google is wiring Nano Banana Pro into multiple products at once. It is in the Gemini app for consumer image creation and editing, with a free entry path for users who want to try it, and deeper access for paid tiers such as Google AI Plus, Pro, and Ultra.

It is also rolling out in Google AI Studio and Vertex AI, where developers and enterprises can integrate it into their own tools and pipelines.

Within Workspace, Nano Banana Pro is surfacing in Slides and Vids, where users can generate or refine images with multi‑turn prompts and higher precision for presentations and video storyboards. This dual presence, in consumer surfaces and enterprise platforms, hints at Google’s strategy to normalize AI‑assisted visuals across everyday communication and professional content creation.

New Patterns In Creative Work

For designers, Nano Banana Pro can collapse several early phases of a project. Instead of sketching wireframes, drawing placeholders, and waiting for a data team to supply charts, a single person can prompt the model to generate a full spread with charts, text, and imagery that already follow a chosen aesthetic.

Revision loops then become about taste, nuance, and correctness, not about raw production.

For non‑designers, the tool lowers the barrier to “good enough” visual communication. A product manager or educator can describe the structure of a diagram or storyboard in natural language and get something visually coherent, even if they never mastered layout tools.

That democratization is powerful, but it also raises questions about where professional craft sits when baseline quality is so easily automated.

Enterprise And Developer Implications

On the enterprise side, Nano Banana Pro is clearly aimed at structured workflows rather than pure art. Vertex AI and the Gemini API allow teams to embed the model into systems that generate marketing assets, product imagery, documentation visuals, dashboards, and even UI mockups in response to data or triggers.

That means image generation can be chained to other tools, where a language model drafts text, then Nano Banana Pro converts it into diagrams or annotated screenshots.
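A chain like that might look roughly as follows. This is a sketch under stated assumptions: `text_to_diagram`, `draft_text`, and `render_image` are hypothetical names, and the two stub functions stand in for real calls to a language model and an image model.

```python
from typing import Callable


def text_to_diagram(
    topic: str,
    draft_text: Callable[[str], str],
    render_image: Callable[[str], bytes],
) -> dict:
    """Draft copy for a topic, then ask an image step to turn it into a diagram."""
    copy = draft_text(topic)
    image_prompt = (
        "Create a labeled diagram that explains the following text. "
        "Keep all labels spelled exactly as written.\n\n" + copy
    )
    return {
        "copy": copy,
        "image_prompt": image_prompt,
        "image": render_image(image_prompt),
    }


# Stubs standing in for real model calls (assumptions for illustration only).
def stub_draft(topic: str) -> str:
    return f"{topic}: request -> queue -> worker -> result"


def stub_render(prompt: str) -> bytes:
    return f"<{len(prompt)} chars rendered>".encode("utf-8")


result = text_to_diagram("Job pipeline", stub_draft, stub_render)
print(result["image_prompt"])
```

Passing the two steps in as functions keeps the chain independent of any particular SDK, so the stubs can be swapped for real clients later without changing the pipeline itself.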

Developers also benefit from the model’s ability to handle multimodal inputs and outputs, including images with rich text, while respecting constraints like aspect ratio, resolution, and brand style. This makes it easier to build tools where users can upload references, specify layout rules, and let the system handle the hard visual work in the background.
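A tool that accepts layout rules from users will usually need to translate an aspect ratio into pixel dimensions before checking or displaying results. The helper below is a small arithmetic sketch. The function names are hypothetical, and the long-edge values used in the example (4096 and 2048 pixels) are illustrative assumptions, not the model's defined 4K and 2K output sizes.

```python
from fractions import Fraction


def parse_aspect_ratio(text: str) -> Fraction:
    """Parse a string such as '16:9' into an exact width/height ratio."""
    width, height = text.split(":")
    ratio = Fraction(int(width), int(height))
    if ratio <= 0:
        raise ValueError(f"aspect ratio must be positive: {text!r}")
    return ratio


def fit_dimensions(aspect_ratio: str, long_edge: int) -> tuple[int, int]:
    """Return (width, height) whose longer side equals long_edge pixels."""
    ratio = parse_aspect_ratio(aspect_ratio)
    if ratio >= 1:  # landscape or square: width is the long edge
        return long_edge, round(long_edge / ratio)
    return round(long_edge * ratio), long_edge  # portrait: height is the long edge


print(fit_dimensions("16:9", 4096))  # (4096, 2304)
print(fit_dimensions("9:16", 2048))  # (1152, 2048)
```

Using `Fraction` keeps the ratio exact, so common values such as 16:9 produce whole-pixel results without floating-point drift.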

Guardrails, Risk, And Attribution

Like other Gemini image models, Nano Banana Pro content carries safeguards and watermarking. Earlier Nano Banana releases used SynthID digital watermarks to mark AI‑generated images, and that approach carries forward as Google expands its image tools.

This kind of labeling is becoming more important as AI‑made visuals enter newsrooms, ads, and public information campaigns.

There are still limits. Model cards and documentation note that even Gemini 3 Pro Image can struggle with very small faces, fine details, and perfectly accurate spelling in challenging scenarios, and that some use cases remain restricted for safety reasons.

For organizations adopting it, due diligence around misuse, bias, and intellectual property remains essential, even as the tooling becomes more capable.

Why This Launch Matters Now

The timing of Nano Banana Pro lines up with a broader shift in AI from “toy” demos toward integrated, production‑grade systems. Google is positioning this model as infrastructure for visual reasoning in the same way Gemini 3 Pro is infrastructure for text and multimodal analysis.

Competing models can generate striking art, but Nano Banana Pro is explicitly framed as an engine for charts, layouts, diagrams, and multilingual campaigns that need to be both beautiful and correct.

This also moves the center of gravity for image editing. Instead of opening a traditional editor and manually nudging sliders, more work starts with a prompt, a reference set, and an outcome in mind, then uses AI for iterative refinement.

The more people grow comfortable with that pattern, the more it reshapes expectations of how fast visual ideas should move from thought to screen.

The Bigger Picture For Visual AI

Nano Banana Pro shows what happens when image models inherit the reasoning, grounding, and product integration of a flagship LLM. It turns AI image editing into something closer to a shared workspace, where prompts, data, and design constraints all sit in the same conversation.

If that trajectory holds, the next competitive edge will not just be which model can draw the prettiest picture, but which one can collaborate across tools, data sources, and teams without losing context. In that sense, Nano Banana Pro is less a quirky Google project with a playful name and more a signal of where everyday visual work is heading.