Unauthorized AI tools are spreading fast, and by 2030 they could expose more than 40% of organizations to a security or compliance incident.

Picture a team crunching through a high-stakes project, the deadline looming. A few employees quietly turn to ChatGPT and similar tools, finding shortcuts that boost productivity.

The problem? They are using AI apps never approved by IT, pushing sensitive data through the digital equivalent of a side door. That side door, often called shadow AI, is now one of cybersecurity’s biggest unknowns.

Gartner’s latest prediction should jolt company leaders everywhere: by 2030, more than 40% of organizations worldwide are expected to suffer security or compliance incidents caused by shadow AI.

As artificial intelligence weaves deeper into every industry, unsanctioned use is creating risks that often go unseen but can no longer be ignored.

What Is Shadow AI, and Why Is It Spreading?

Shadow AI refers to employees using AI-powered apps, like chatbots or code generators, without formal approval or oversight from IT or security teams. Think of it as shadow IT’s next wave: tech-savvy staff bringing new tools into workflows to solve problems faster or gain an edge.

Why does this matter now? Partly, it is the explosive growth of generative AI.

According to Gartner’s survey of cybersecurity leaders, 69% of organizations suspect or have evidence that employees are using prohibited AI tools at work. Because much of this use goes undetected, the true figure may be higher.

IBM’s 2025 Cost of a Data Breach Report found that shadow AI incidents account for 20% of all breaches and cost an average of $670,000 more than other breaches.

The Hidden Costs: Data Leaks, Compliance Trouble, and Technical Debt

Shadow AI is not just a technical issue; it is an organizational blind spot. What makes it risky? These unsanctioned AI apps can move sensitive information beyond protected networks.

For example, confidential business plans, customer data, or even proprietary code can be fed into public tools, where tracking and control disappear.

In a prominent 2023 case, Samsung banned generative AI at work after employees accidentally exposed internal source code by pasting it into ChatGPT.

Shadow AI raises the risk of intellectual property loss, regulatory violations, and data breaches. IBM reports that 65% of shadow AI incidents compromised customers’ personally identifiable information (PII), well above the global average.

The financial and reputational damage can scale quickly: a shadow AI breach reportedly costs a company an average of $4.63 million, compared with $3.96 million for other incidents.

Shadow AI also fuels technical debt. Gartner warns that by 2030, even sanctioned generative AI, if poorly managed, could saddle organizations with delayed upgrades and rising maintenance costs. Tools that IT does not know about are harder still to manage.

Unmanaged technical debt can quietly undermine security and slow future innovation.

Why Is Shadow AI So Hard to Manage?

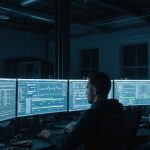

Part of the challenge is visibility. Employees often access AI apps through personal devices or encrypted web channels that bypass security tools.

Conventional monitoring and browser logs miss an estimated 70–80% of shadow AI activity, according to recent security research. In other words, most organizations are “flying blind”: they cannot see what is being shared, processed, or stored through unsanctioned AI.

The problem is not just rogue employees. As AI apps get easier to use, even executives and security professionals turn to them for convenience. Because the practice is so widespread, it is less about bad actors than about adoption outpacing oversight.

What Happens Next, and What Can Be Done?

The risk is not going away. Gartner expects that by 2027, 75% of employees will acquire, modify, or create technology outside IT’s visibility, up from 41% in 2022. Organizations therefore need more than bans or technical blocks; proactive governance is essential.

Experts recommend several steps:

- Build clear, enterprise-wide policies for AI tool usage, setting firm rules about what is permitted and where data can flow.

- Conduct regular audits for shadow AI activity and track incident metrics.

- Educate the workforce, at all levels, about the risks of dropping sensitive company data into external AI tools.

- Favor open standards and modular AI architectures, which help prevent vendor lock-in and keep options flexible as technology evolves.

- Incorporate generative AI risk evaluation directly into software assessment and procurement processes.
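For readers on the IT side, the audit step can be made a little more concrete. The following is a minimal Python sketch, not a production tool, and everything in it is an assumption for illustration: it supposes a hypothetical web proxy log where each line reads `timestamp user url`, and the watchlist and approved list are illustrative examples rather than a vetted inventory of AI services.

```python
from collections import Counter
from urllib.parse import urlsplit

# Illustrative watchlist of generative AI hostnames (an assumption, not a vetted inventory).
AI_DOMAINS = {"chatgpt.com", "chat.openai.com", "gemini.google.com", "claude.ai"}

# Hypothetical allowlist: AI tools the organization has formally approved.
APPROVED = {"gemini.google.com"}


def is_listed(host, domains):
    """True if host equals a listed domain or is a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in domains)


def audit(log_lines):
    """Count requests per (user, host) to AI services that are not approved.

    Assumes a hypothetical log format: 'timestamp user url', whitespace-separated.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines rather than failing the audit
        _, user, url = parts
        host = (urlsplit(url).hostname or "").lower()
        if is_listed(host, AI_DOMAINS) and not is_listed(host, APPROVED):
            hits[(user, host)] += 1
    return hits


if __name__ == "__main__":
    sample = [
        "2025-01-10T09:00 alice https://chatgpt.com/c/123",
        "2025-01-10T09:05 bob https://gemini.google.com/app",
        "2025-01-10T09:07 alice https://chatgpt.com/c/124",
        "2025-01-10T09:10 carol https://intranet.example.com/wiki",
    ]
    # Per-user counts like these can feed the incident metrics an audit tracks.
    for (user, host), count in audit(sample).most_common():
        print(f"{user} -> {host}: {count} request(s)")
```

A sketch like this only sees traffic that passes through the logged proxy, so it cannot catch personal devices or other channels that bypass it. That is exactly the blind spot described earlier, which is why audits work best alongside policy and training rather than as a replacement for them.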

So What? Why This Matters for Everyone

The future will be shaped not only by how fast AI capabilities advance, but also by how wisely organizations manage their adoption. Shadow AI shows that innovation without clear boundaries can open pathways to both massive gains and critical risks.

For leaders, employees, and IT professionals alike, understanding and confronting shadow AI is not just a technical task; it is a test of strategic awareness and collective responsibility.

The next chapter of AI in business may be written by those who look beyond the immediate promise and ask, “What are we missing, and how do we close the gap?”